Testing your network for live media delivery – Part 1

Why is live media stream testing so important?

Whenever we launch a new live media stream, whether it is the first stream or an addition to an existing lineup, we must first confirm that we have adequate upload and download bitrate to sustain it, and then evaluate whether the intended network can support it. In the past, video transport was direct and, in a sense, simpler. Transport choices were limited to dark fiber, satellite and dedicated paths. The available methods have since advanced, and today our choices include managed and unmanaged networks, public cloud, SD-WAN, MPLS, 4G/5G and combinations of available paths regardless of the medium.

What do these dynamic network choices have in common? They are ever changing, and they are used directly by end users and enterprise organizations to conduct their daily business. Our new stream has to share the transport network with unrelated data traffic, so we need to understand the network conditions before we begin and again while streaming. It is much like checking traffic alerts on Google Maps or Waze before starting a trip: you want to anticipate congestion, road construction and accidents, and to know how long the trip will take. In the streaming world, however, the transport stream requires similar details every second, minute, hour and day, and your success depends on this knowledge.

This is why those with the most invested and exposed in this field engage deeply in monitoring the health of network connectivity and the integrity of transport streams, and in supporting the network and video technicians responsible for troubleshooting and responding to problems. In this article we examine which tools are available, what is missing, and an approach that we believe will make your applications perform better when applied.

How is stream testing conducted today?

The most well-known technique is ‘let’s run a test stream’ and then evaluate its performance over time. The operator is in control: they prepare a reference stream with the desired parameters, specifying bitrate, protocol and packet size, and send it to the destination. The results are evaluated at the receiving end, usually visually on a screen or with a dedicated metric tool or appraisal software. If the bandwidth is available, the operator obtains a realistic evaluation of how the live stream will perform. If something is wrong, the performance metrics allow the operator to start evaluating the problem: a lower-bitrate stream can be tested, or the root cause of the issue can be traced. In most cases, however, the full picture of the link's behavior is not revealed, only an overall performance measurement without additional detail. For example, such a test does not answer what would happen if the stream bitrate were increased, or if additional streams were added.
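To make the "desired parameters" of a test stream concrete, the sketch below shows the arithmetic that ties bitrate and packet size to a sending schedule. It is only an illustration: the function name `pacing_for` is ours, the 1316-byte payload (seven 188-byte MPEG-TS packets) and the 10 Mbps rate are assumed example values, and IP/UDP header overhead is ignored.

```python
from typing import Tuple


def pacing_for(bitrate_bps: float, payload_bytes: int) -> Tuple[float, float]:
    """Return (packets_per_second, seconds_between_packets) for a constant-rate stream.

    Only payload bits are counted; protocol header overhead is ignored (assumption).
    """
    if bitrate_bps <= 0 or payload_bytes <= 0:
        raise ValueError("bitrate and payload size must be positive")
    packets_per_second = bitrate_bps / (payload_bytes * 8)
    return packets_per_second, 1.0 / packets_per_second


# Example values (assumed): a 10 Mbps stream carried in 1316-byte payloads.
pps, gap = pacing_for(10_000_000, 1316)
print(f"{pps:.0f} packets/s, one packet every {gap * 1000:.3f} ms")
```

Doubling the bitrate at the same packet size halves the gap between packets, so the same test stream places a very different load on the link at different rates.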

Speedtest

Most operators and users are familiar with the widely used tool Speedtest (https://www.speedtest.net/). Speedtest is often the primary “go-to test” for upload and download speed, measured against a remote server selected from a list. How is the resulting information applied?

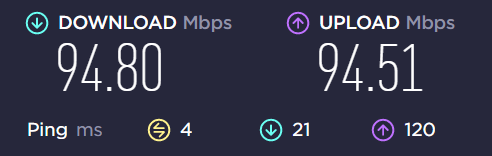

From an example report:

In this example, the maximum upload and download speeds to and from a selected remote server are shown. But the protocol used in this test is typically TCP, the packet size exchanged is unknown, and the resulting latency is displayed as an average. Questions remain: how many packets were lost during the test, and how much jitter was observed? How will network connections behave toward peer sites that are not listed? Answers to these simple questions are not available. Speedtest may be useful for home applications and for checking connectivity outside internal networks, but not for accurately evaluating a link for heavy streaming or robust performance.

Iperf/Jperf

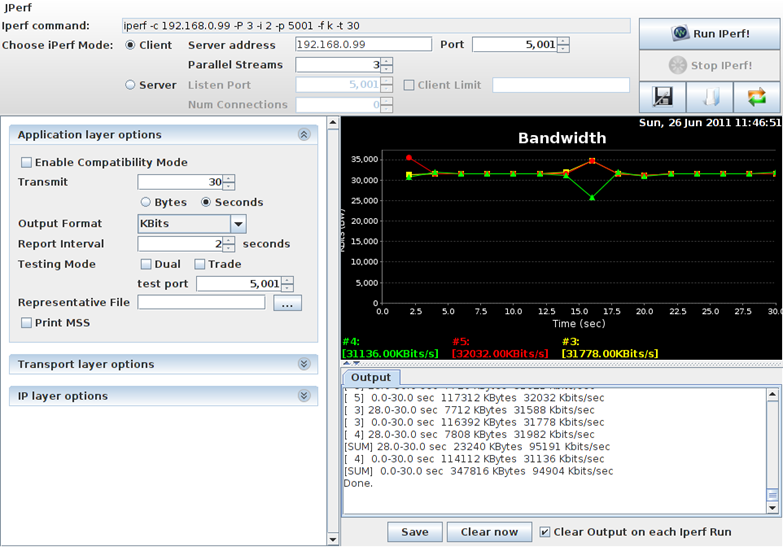

A better, more professional tool is iperf for command-line interface (CLI) power users, or Jperf for those who prefer a graphical application (I recommend reviewing this: https://turbofuture.com/computers/How-to-Measure-Network-Throughput-Using-JPerf). Iperf uses a client at the source and a server installed at the destination. The server listens for connections from one or more clients and evaluates the number of arriving packets, their bitrate, how many bytes are transferred, the number of lost packets, and the average packet latency.

These tools provide more control over the test, allowing the user to conduct a more robust test and to fine-tune its parameters. For example, the user can specify the protocol, single or multiple paths, packet size, target bitrate and test duration, and can configure a bidirectional test.
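As an illustration of how these parameters map onto a command line, here is a minimal sketch that assembles a client invocation. It assumes iperf3 specifically (iperf2 and Jperf use somewhat different options), the helper name `build_iperf3_command` and its defaults are ours, and `--bidir` requires a reasonably recent iperf3 release. Note that `-P` opens parallel streams to the same server, which is not the same thing as testing multiple network paths.

```python
from typing import List


def build_iperf3_command(server: str,
                         bitrate_mbps: float,
                         payload_bytes: int = 1316,
                         duration_s: int = 60,
                         udp: bool = True,
                         parallel_streams: int = 1,
                         bidirectional: bool = False) -> List[str]:
    """Assemble an iperf3 client command as an argument list (hypothetical helper)."""
    cmd = [
        "iperf3",
        "-c", server,                   # run as client against this server
        "-t", str(duration_s),          # test duration in seconds
        "-l", str(payload_bytes),       # payload length per packet
        "-b", f"{bitrate_mbps:g}M",     # target bitrate in Mbit/s
    ]
    if udp:
        cmd.append("-u")                # UDP instead of the default TCP
    if parallel_streams > 1:
        cmd += ["-P", str(parallel_streams)]  # parallel streams, not separate paths
    if bidirectional:
        cmd.append("--bidir")           # test both directions at once
    cmd.append("-J")                    # machine-readable JSON report
    return cmd


# 192.0.2.10 is a documentation-only address used as a placeholder.
print(" ".join(build_iperf3_command("192.0.2.10", 10)))
```

The resulting list can be passed to `subprocess.run` on a machine where iperf3 is installed and a matching server (`iperf3 -s`) is running at the destination.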

The results show the maximum bitrate the link achieved and whether it is higher or lower than the desired target, along with the average jitter and the number of packets lost during the test.
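For readers who script their tests, the following sketch pulls these summary figures out of a JSON report. It assumes an iperf3 UDP test run with JSON output, and the field names under `end.sum` (`bits_per_second`, `jitter_ms`, `lost_packets`, `packets`) are what we understand iperf3 to emit; they may differ between versions, so treat them as an assumption to verify against your own output. The function name is ours.

```python
import json
from typing import Any, Dict


def summarize_udp_result(report_json: str, target_bps: float) -> Dict[str, Any]:
    """Extract the headline figures from an iperf3 UDP JSON report (assumed layout)."""
    report = json.loads(report_json)
    summary = report["end"]["sum"]      # assumed location of the UDP summary
    achieved = summary["bits_per_second"]
    return {
        "achieved_bps": achieved,
        "met_target": achieved >= target_bps,
        "avg_jitter_ms": summary["jitter_ms"],
        "lost_packets": summary["lost_packets"],
        "total_packets": summary["packets"],
    }
```

Note that everything returned here is a single aggregate per test run: one bitrate, one average jitter, one loss count.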

What remains elusive are the minimum and maximum jitter and the lost-packet behavior: whether the losses are intermittent, follow a pattern, or occur in bursts. It is also unknown whether the stream is received at a constant rate or in bursts. This information is critical for effective delivery of video over IP. Most Jperf applications do provide visual charts and graphs, but they give only a snapshot view.
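To illustrate the kind of detail that is missing, the sketch below derives minimum and maximum jitter and the loss bursts from a per-packet arrival log. It assumes a hypothetical receiver that records a sequence number and arrival time for every packet, a sender that transmits at a fixed interval, and no packet reordering. The jitter here is simply the deviation of each inter-arrival gap from the expected spacing, a simplified measure rather than the smoothed estimator used by RTP.

```python
from typing import Dict, List, Tuple


def analyze_arrivals(arrivals: List[Tuple[int, float]],
                     send_interval_s: float) -> Dict[str, object]:
    """Analyze (sequence_number, arrival_time_s) pairs listed in arrival order.

    Assumes constant-rate sending and no reordering (illustrative only).
    """
    deviations: List[float] = []
    loss_bursts: List[int] = []
    for (prev_seq, prev_t), (seq, t) in zip(arrivals, arrivals[1:]):
        step = seq - prev_seq
        if step > 1:
            # step - 1 consecutive sequence numbers never arrived: one loss burst
            loss_bursts.append(step - 1)
        expected_gap = step * send_interval_s
        deviations.append(abs((t - prev_t) - expected_gap))
    return {
        "min_jitter_s": min(deviations, default=0.0),
        "max_jitter_s": max(deviations, default=0.0),
        "lost_packets": sum(loss_bursts),
        "loss_bursts": loss_bursts,          # length of each burst, in order
        "longest_burst": max(loss_bursts, default=0),
    }
```

Two links with the same total loss can produce very different `loss_bursts` lists: many single-packet losses in one case, one long burst in the other. An average alone cannot tell them apart.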

What else is missing?

Our observation is that current testing practices are not sufficient for current and emerging business environments, and specifically for the increasing use of unmanaged networks. Local- and wide-area networks traditionally share links with unrelated traffic, regardless of whether the path traverses the public Internet or stays within a cloud. The user may not need to know what that traffic is, but does require insight into the IP traffic patterns and how that unpredictable data may impact the video transport streams. Is that unknown data contributing only light traffic, or is it contributing to network congestion? Is the competing data originating from the same location or traveling to the same destination as the video streams? Most of these questions remain unanswered by the tools just presented.

There are open and advanced commercial IT monitoring solutions that can inspect IP flows and detect routes, but they tend to look at overall network availability and have a very low sampling rate, so they fail to detect the transient events that often negatively impact a live video stream. What is required is a technique that tests and evaluates all parameters and influences in a single solution, provides an in-depth view of the network’s actual behavior during the test, and detects the thresholds at which the network degrades and is no longer suitable for reliably transporting a content stream. The test must operate ‘without harm’, meaning that it is applied gradually and halts if it detects excessive packet loss, increased latency, increased jitter, or any other degradation that may harm other data services. The testing needs to be aware of all available routes and evaluate each one individually, but also as a whole, as seamless switching is increasingly applied in network architectures. Accurate testing applications must detect individual route changes and limitations so that smart decisions can be made about which route to prioritize, or how to load-balance the traffic according to the detected limitations.
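The ‘without harm’ idea can be sketched as a gradual ramp that stops at the first sign of degradation. This is only a minimal illustration under our own assumptions, not a description of any particular product: `measure` is a hypothetical callback that runs a short test at the given bitrate and returns loss, latency and jitter, and the threshold values are arbitrary placeholders.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class LinkSample:
    loss_pct: float
    latency_ms: float
    jitter_ms: float


def ramp_without_harm(measure: Callable[[float], LinkSample],
                      start_mbps: float,
                      stop_mbps: float,
                      step_mbps: float,
                      max_loss_pct: float = 0.5,             # placeholder threshold
                      max_latency_increase_ms: float = 20.0,  # placeholder threshold
                      max_jitter_ms: float = 10.0             # placeholder threshold
                      ) -> Optional[float]:
    """Raise the test bitrate step by step; halt as soon as the link degrades.

    Returns the highest bitrate that stayed within all thresholds, or None
    if even the first step exceeded a threshold.
    """
    baseline: Optional[LinkSample] = None
    last_safe: Optional[float] = None
    rate = start_mbps
    while rate <= stop_mbps:
        sample = measure(rate)
        if baseline is None:
            baseline = sample       # latency at the lowest rate is the reference
        degraded = (
            sample.loss_pct > max_loss_pct
            or sample.latency_ms - baseline.latency_ms > max_latency_increase_ms
            or sample.jitter_ms > max_jitter_ms
        )
        if degraded:
            break                   # stop immediately instead of pushing further
        last_safe = rate
        rate += step_mbps
    return last_safe
```

Running the same ramp once per available route would give a per-route limit that can feed a prioritization or load-balancing decision.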

In the second part of this blog we will explore how Alvalinks’ new StreamTest solution examines the network in depth and demonstrates an overlooked parameter and its impact on the network’s ability to deliver live media.